The two groups - the Campaign for a Commercial-Free Childhood and the Center for Digital Democracy - said they were able to use the app to find videos that were extremely disturbing and "potentially harmful for young kids to view."

Among the objectionable content, the groups cited explicit sexual language in cartoons; jokes about pedophilia and drug use; activities such as juggling knives, tasting battery acid, and making a noose; and adult discussions about family violence, pornography, and child suicide, according to a Wall Street Journal blog post.

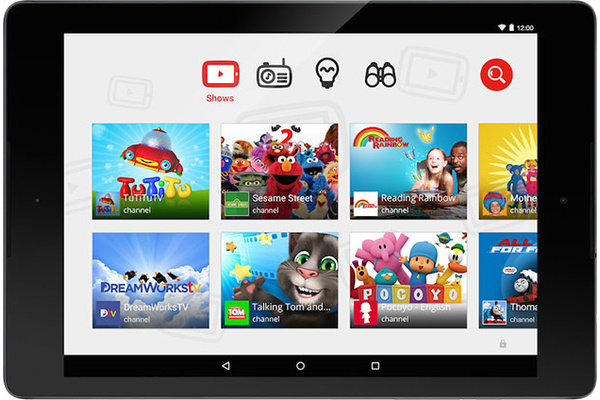

"Google promised parents that YouTube Kids would deliver appropriate content for children, but it has failed to fulfill its promise," said attorney Aaron Mackey.

Google said it works hard to make sure that the videos accessible through the app are as kid-friendly as possible. It uses automatic filters, user feedback and reviews to determine whether access to content should be removed.

Parents can disable the search function in the app, which drastically limits the content available for kids to view.

Written by: James Delahunty @ 19 May 2015 17:28